How to Choose the Best Web Scraping Service for E-Commerce (2026)

- Raquell Silva

- 7 days ago

- 8 min read

Choosing the best web scraping service for e-commerce means evaluating providers across eight core criteria: data accuracy (specifically Usable Record Rate), uptime and reliability, anti-bot capability, scalability, legal compliance, delivery flexibility, pricing transparency, and customer support quality. For most e-commerce companies where competitor pricing data drives revenue decisions, a fully managed service is the right fit. It eliminates the technical overhead and failure modes that matter most during peak trading periods.

The decision carries more financial weight than it might initially appear. A McKinsey analysis of dynamic pricing in retail found that retailers who adopt data-driven dynamic pricing consistently see sales growth of 2–5% and margin improvements of 5–10%. On the flip side, Gartner estimates that poor data quality costs the average organization $12.9 million annually.

At Ficstar, we've spent over 20 years building competitive pricing data pipelines for enterprise retailers. We've seen what separates providers that deliver real value from those that create expensive, ongoing headaches. This guide covers the evaluation criteria that matter most, the red flags that should stop a deal, and the questions worth asking before signing any contract.

Why E-Commerce Runs on Scraped Data

Amazon changes product prices approximately 2.5 million times per day, which means the price of an average product changes roughly once every 10 minutes. Over 83% of Amazon sales flow through the Buy Box, where competitive pricing is the single biggest factor in visibility.

This is the environment every online retailer now competes in: a marketplace where pricing is fluid, inventory shifts hourly, and the businesses with the fastest access to competitor intelligence win.

According to Market.us industry research, retail and e-commerce account for 36.7% of total web scraping end-user activity, with price monitoring and dynamic pricing alone making up 25.8% of all scraping applications. An estimated 82% of e-commerce companies now use web scraping to collect publicly available data, a figure that has grown sharply in recent years.

The use cases extend well beyond pricing:

- MAP compliance monitoring: Catching unauthorized sellers advertising below minimum prices
- Product data enrichment: Descriptions, specs, images, and reviews across platforms
- Competitor assortment tracking: Identifying catalog gaps and expansion opportunities
- Stock-level monitoring: Real-time inventory alerts tied to competitor availability
- Market trend analysis: Demand forecasting and seasonal intelligence
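
As an illustration of the first use case, MAP monitoring reduces to comparing each scraped advertised price against a per-SKU price floor. The sketch below is a minimal example under stated assumptions: the `Listing` fields, the SKU, the seller names, and the prices are all hypothetical, and real pipelines would add matching logic for product variants.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Listing:
    """One scraped offer (hypothetical field names)."""
    sku: str
    seller: str
    advertised_price: Decimal


def find_map_violations(listings, map_prices):
    """Return listings advertised below the minimum advertised price (MAP).

    SKUs with no MAP on file are skipped rather than flagged.
    """
    violations = []
    for listing in listings:
        floor = map_prices.get(listing.sku)
        if floor is not None and listing.advertised_price < floor:
            violations.append(listing)
    return violations


# Hypothetical sample data, for illustration only.
map_prices = {"TIRE-225-65R17": Decimal("149.99")}
listings = [
    Listing("TIRE-225-65R17", "seller-a", Decimal("149.99")),  # at MAP: fine
    Listing("TIRE-225-65R17", "seller-b", Decimal("139.00")),  # below MAP
    Listing("UNKNOWN-SKU", "seller-c", Decimal("10.00")),      # no MAP on file
]
violations = find_map_violations(listings, map_prices)
print([v.seller for v in violations])
```

Using `Decimal` rather than floats avoids rounding surprises when a price sits exactly at the floor.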

The Three Types of Web Scraping Services

Before evaluating individual providers, it helps to understand how the market is structured. Web scraping services fall into three broad categories, each suited to different organizational needs.

| Criterion | Self-Service Tools | Managed Services (e.g., Ficstar) | Hybrid Platforms |
| --- | --- | --- | --- |
| Setup effort | High – requires developer resources | Minimal – provider handles everything | Moderate – pre-built tools with optional support |
| Data accuracy (URR) | Variable; depends on internal QA | High; dedicated QA teams, 50+ validation checks | Moderate; automated QA, limited human review |
| Anti-bot handling | Basic unless significant proxy infrastructure is built | Advanced: rotating proxies, CAPTCHA solving, fingerprint evasion | Varies; enterprise features often cost extra |
| Scalability | Limited by internal engineering bandwidth | Enterprise-grade; millions of pages per hour | Good for moderate volumes |
| Maintenance burden | High – site changes require constant scraper updates | Zero for the client; provider handles proactively | Low to moderate |
| Compliance | Client bears full responsibility | Provider manages compliance documentation | Shared responsibility |
| Best for | Technical teams with development resources | Enterprises needing production-grade data without internal overhead | Mid-market companies with some technical capacity |
| Typical cost | Low upfront; $1–2M/year at scale for in-house teams | $5K–$50K+ per project; no maintenance costs | $30–$2,500+/month depending on volume |

For e-commerce companies where pricing intelligence directly drives revenue, the fully managed model eliminates the operational risks that tend to compound exactly when they cause the most damage: peak season, flash sales, and competitive price wars.

Eight Criteria for Evaluating a Web Scraping Provider

Choosing a web scraping service is not a feature-checklist exercise. The real differentiators emerge under production conditions. Here are the eight dimensions that matter most.

1. Data Accuracy (Usable Record Rate)

Raw success rates vary from roughly 96% to 99.96% across leading APIs, but success rates alone are a misleading metric. The better measure is the Usable Record Rate (URR): the percentage of delivered records that pass quality checks including deduplication, null thresholds, and validity rules.
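
To make the definition concrete, here is a minimal sketch of how a URR could be computed over a delivered batch. It is one possible reading of "deduplication, null thresholds, and validity rules", not a standard formula: the field names (`sku`, `retailer`, `price`), the duplicate key, and the single validity rule (a positive numeric price) are assumptions chosen for illustration.

```python
def usable_record_rate(records, required_fields, max_null_fraction=0.0):
    """Share of delivered records that pass dedup, null, and validity checks.

    Assumptions (illustrative only): a record is a dict, duplicates are
    detected on (sku, retailer), and the only validity rule is price > 0.
    """
    if not records:
        return 0.0
    seen = set()
    usable = 0
    for rec in records:
        key = (rec.get("sku"), rec.get("retailer"))
        if key in seen:
            continue  # duplicate of an earlier record
        seen.add(key)
        nulls = sum(1 for f in required_fields if rec.get(f) in (None, ""))
        if nulls / len(required_fields) > max_null_fraction:
            continue  # too many missing required fields
        price = rec.get("price")
        if not isinstance(price, (int, float)) or price <= 0:
            continue  # fails the validity rule
        usable += 1
    return usable / len(records)


# Hypothetical batch: 5 delivered records, 2 of them usable.
batch = [
    {"sku": "A1", "retailer": "shop-x", "price": 19.99},
    {"sku": "A1", "retailer": "shop-x", "price": 19.99},  # duplicate
    {"sku": "A2", "retailer": "shop-x", "price": None},   # null price
    {"sku": "A3", "retailer": "shop-x", "price": -5.0},   # invalid price
    {"sku": "A4", "retailer": "shop-y", "price": 42.0},
]
urr = usable_record_rate(batch, ["sku", "retailer", "price"])
print(f"URR: {urr:.0%}")
```

Note that every record in this batch was "successfully" delivered, so a raw success rate would report 100% while the URR is far lower.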

A vendor delivering 99% URR at a slightly higher per-record cost beats one delivering 80% URR at a lower sticker price, because cost-per-usable-record is what actually drives ROI.
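
The arithmetic behind that comparison is simply the sticker price divided by the URR. The per-record prices below are hypothetical, picked only to show a case where the vendor with the higher sticker price comes out cheaper per usable record.

```python
def cost_per_usable_record(price_per_record, urr):
    """Effective cost of one record that actually passes quality checks."""
    if not 0 < urr <= 1:
        raise ValueError("urr must be in the range (0, 1]")
    return price_per_record / urr


# Hypothetical sticker prices in dollars per delivered record.
vendor_a = cost_per_usable_record(0.0012, 0.99)  # pricier sticker, 99% URR
vendor_b = cost_per_usable_record(0.0010, 0.80)  # cheaper sticker, 80% URR

print(f"Vendor A: ${vendor_a:.5f} per usable record")
print(f"Vendor B: ${vendor_b:.5f} per usable record")
```

This ignores the internal cost of finding and discarding the unusable records, which would widen the gap further.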

At Ficstar, every data file goes through 50+ QA checks, including regression testing and AI anomaly detection, before delivery. That shifts the quality burden entirely off the client's internal team.

2. Reliability and Uptime

Enterprise-grade SLAs typically guarantee 99.5% to 99.9% uptime, translating to between 43 minutes and 3.6 hours of monthly downtime. But uptime alone is an incomplete picture.
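
The downtime figures follow directly from the uptime percentage. A short sketch of the conversion, assuming a 30-day month (the `days_in_month` default is an assumption, and SLAs may define the measurement window differently):

```python
def monthly_downtime_minutes(uptime_percent, days_in_month=30):
    """Maximum downtime per month allowed by an uptime guarantee."""
    total_minutes = days_in_month * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


for sla in (99.9, 99.5):
    minutes = monthly_downtime_minutes(sla)
    print(f"{sla}% uptime allows {minutes:.1f} min ({minutes / 60:.1f} h) per month")
```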

What matters equally is mean time to repair (MTTR) when a scraper breaks, and whether the provider detects site structure changes before bad data enters your pipeline. Proactive monitoring is the differentiator here. Most providers detect failures after the fact. The better approach is automated monitoring that identifies when a target website has changed structure and updates crawlers before extraction quality degrades.
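
One simple way to catch a structure change before bad data spreads is to compare each field's fill rate in a fresh batch against a historical baseline. The sketch below is a generic heuristic offered as an assumption, not a description of any particular provider's monitoring; the field names, baseline values, and the 10-point tolerance are hypothetical.

```python
def detect_structure_drift(batch, baseline_fill_rates, tolerance=0.10):
    """Return fields whose fill rate fell more than `tolerance` below baseline.

    A sudden drop usually means a selector stopped matching after the
    target site changed its markup.
    """
    drifted = {}
    total = len(batch)
    for field, baseline in baseline_fill_rates.items():
        filled = sum(1 for rec in batch if rec.get(field) not in (None, ""))
        rate = filled / total if total else 0.0
        if baseline - rate > tolerance:
            drifted[field] = rate
    return drifted


# Hypothetical baseline and a batch scraped after a site redesign.
baseline = {"title": 1.0, "price": 0.99}
batch = [
    {"title": "A", "price": "9.99"},
    {"title": "B", "price": None},
    {"title": "C", "price": ""},
    {"title": "D"},
]
drifted = detect_structure_drift(batch, baseline)
print(drifted)
```

A check like this runs before delivery, so a failing batch can be held back and the crawler repaired instead of shipping empty price fields downstream.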

3. Anti-Bot Bypass Capability

Bot traffic has become a defining feature of the modern web. Cloudflare's Application Security Report found that approximately 31% of all application traffic it processes is automated bot traffic, a figure that has remained consistent for several years. Cloudflare alone protects over 19 million active websites, and in mid-2025 introduced adaptive challenges based on behavioral anomalies that cut success rates for unprepared scrapers by 30%.

Effective providers deploy rotating residential proxies, headless browser rendering, CAPTCHA-solving mechanisms, and browser fingerprint management. This is not a static capability. Anti-bot systems evolve constantly, and a provider relying on techniques that worked three years ago will fail against modern defenses.

4. Scalability Under Pressure

E-commerce scraping demand is inherently spiky. Peak season, flash sales, and competitive price wars all create sudden volume surges. Hidden costs often emerge at exactly these moments: emergency proxy pool expansions, throttling surcharges, and degraded accuracy under load.

Ask any prospective provider directly: how do you handle volume spikes, and what costs are triggered when they occur? The answer reveals more than any sales pitch will.
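
A quick model helps when comparing answers to that question. The sketch below assumes a hypothetical pricing structure (a base fee covering an included request volume, a per-1,000 overage rate, and a percentage surcharge applied to overage during spikes); actual contracts vary, so treat every parameter as a placeholder to be replaced with the provider's real terms.

```python
def monthly_bill(requests, included_requests, base_fee,
                 overage_per_1k, spike_surcharge=0.0):
    """Estimate a monthly bill under a hypothetical base-plus-overage plan."""
    overage = max(0, requests - included_requests)
    overage_cost = overage / 1000 * overage_per_1k
    return base_fee + overage_cost * (1 + spike_surcharge)


# Hypothetical terms: $2,500 base for 1M requests, $3 per extra 1,000,
# and a 25% surcharge on overage during peak periods.
normal_month = monthly_bill(1_000_000, 1_000_000, 2500.0, 3.0)
peak_month = monthly_bill(3_000_000, 1_000_000, 2500.0, 3.0, spike_surcharge=0.25)

print(f"Normal month: ${normal_month:,.2f}")
print(f"Peak month:   ${peak_month:,.2f}")
```

Under these made-up terms, tripling the volume quadruples the bill, which is exactly the kind of effect worth surfacing before a contract is signed.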

For context on the scale involved in serious enterprise web scraping: we've run projects collecting tire pricing and shipping data from 20 major competitors across hundreds of U.S. ZIP codes simultaneously, and scraped tiered pricing for 700,000+ electronic parts across distributors and manufacturers. These are the kinds of workloads that break template-based tools.

5. Legal and Compliance Posture

The legal landscape for web scraping has clarified significantly in recent years. In hiQ v. LinkedIn, the Ninth Circuit held that scraping publicly accessible data likely does not violate the Computer Fraud and Abuse Act. The 2024 Meta v. Bright Data decision pointed the same way for social media platforms: the court found that Meta's terms of service did not bar logged-out scraping of public data.

However, real legal risk remains in specific scenarios: scraping behind login walls, collecting personal data without GDPR/CCPA compliance, and overwhelming servers with aggressive request rates. The 2024 Ryanair v. Booking.com verdict showed that scraping with intent to resell can also trigger liability.
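
On the request-rate point, the usual safeguard is a per-host throttle that enforces a minimum gap between requests. The class below is a minimal sketch of that idea; the class name and the two-requests-per-second limit are assumptions, and an appropriate rate depends on the target site.

```python
import time


class RequestThrottle:
    """Enforce a minimum interval between consecutive requests to one host."""

    def __init__(self, max_per_second, clock=time.monotonic, sleep=time.sleep):
        if max_per_second <= 0:
            raise ValueError("max_per_second must be positive")
        self._min_interval = 1.0 / max_per_second
        self._clock = clock    # injectable so the class can be tested
        self._sleep = sleep
        self._last = None

    def wait(self):
        """Block until the next request is allowed, then record its time."""
        now = self._clock()
        if self._last is not None:
            remaining = self._min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


# Usage: call wait() immediately before each request to the same host.
throttle = RequestThrottle(max_per_second=2)
for page in range(3):
    throttle.wait()
    print(f"fetching page {page}")  # the real HTTP request would go here
```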

Responsible providers publish clear compliance documentation, maintain audit logs, and offer Data Processing Agreements. Our approach at Ficstar focuses exclusively on publicly accessible data, with alignment to Canadian and global data regulations.

6. Data Delivery and Integration Flexibility

Standard offerings include JSON, CSV, and XML, but the real question is whether the provider can deliver data directly into your existing systems (ERP platforms, pricing engines, data warehouses, BI dashboards) without manual transformation.

Schedule flexibility matters too. For competitive price monitoring, you may need hourly updates during a price war and weekly updates for slower-moving categories.

Our data extraction services deliver in CSV, Excel, JSON, XML, HTML, SQL, and via API integration, on schedules ranging from hourly to monthly. Data arrives cleaned, deduplicated, and normalized, ready for immediate system ingestion.
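
For readers unfamiliar with what "cleaned, deduplicated, and normalized" involves, the sketch below shows the general shape of such a step. It is an illustrative example, not Ficstar's pipeline: the field names are hypothetical, and the price parser assumes US-style formatting (comma as thousands separator, period as decimal point).

```python
import re
from decimal import Decimal, InvalidOperation


def normalize_price(raw):
    """Parse strings like '$1,299.00' into a Decimal; return None if unparseable."""
    if raw is None:
        return None
    cleaned = re.sub(r"[^\d.]", "", str(raw))  # drop currency symbols, commas, text
    if not cleaned:
        return None
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def clean_records(rows):
    """Normalize keys and prices, dropping unparseable and duplicate rows."""
    seen = set()
    cleaned = []
    for row in rows:
        price = normalize_price(row.get("price"))
        sku = (row.get("sku") or "").strip().upper()
        retailer = (row.get("retailer") or "").strip().lower()
        key = (sku, retailer)
        if price is None or key in seen:
            continue
        seen.add(key)
        cleaned.append({"sku": sku, "retailer": retailer, "price": price})
    return cleaned


# Hypothetical raw rows as they might come off a page.
raw_rows = [
    {"sku": " ab-1 ", "retailer": "ShopX", "price": "$1,299.00"},
    {"sku": "AB-1", "retailer": "shopx", "price": "1299"},  # duplicate after normalizing
    {"sku": "AB-2", "retailer": "ShopX", "price": "N/A"},   # unparseable price
]
cleaned_rows = clean_records(raw_rows)
print(cleaned_rows)
```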

7. Pricing Transparency

Web scraping pricing models vary widely: pay-per-request, subscription tiers, credit-based systems, and custom enterprise contracts all exist.

The critical metric to evaluate is cost per usable record, not cost per request.

Hidden costs to probe for:

- Maintenance fees when target sites change structure
- Scaling surcharges during high-volume periods
- Compliance overhead for GDPR/CCPA audits and logging

Building an in-house scraping team at scale typically runs $1–2 million annually, with 60–70% of that (roughly $0.6–1.4 million) consumed by maintenance alone. That in-house figure, not a per-request sticker price, is the baseline a fully managed project should be compared against.

8. Customer Support Quality

Look for dedicated project managers, real-time dashboards, proactive monitoring with automated alerts, and documented incident response processes. Red flags include limited support hours, no dedicated technical contact, and vague SLA language around response times.

Red Flags That Should Stop a Deal

Beyond the core criteria, experienced buyers consistently flag the same warning signs:

- Vague anti-bot explanations. If a provider can't clearly explain how they handle Cloudflare or DataDome, they probably rely on basic techniques that will fail.
- No verifiable client references or published case studies. Limited real-world evidence usually indicates limited real-world experience.
- Rigid contracts without pilot project options. A provider confident in their work will let you verify it before a long-term commitment.
- No proactive monitoring. Scrapers can break silently for days. Without automated alerting, corrupted data enters your pricing models without warning.
- Low sticker price without URR transparency. A provider advertising low per-request costs without disclosing usable record rates may be the most expensive option in practice.

Thomas Redman, Harvard Business Review contributor and president of Data Quality Solutions, has estimated that most organizations lose between 15% and 25% of revenue due to bad data. In the scraping context, inaccurate competitor pricing data doesn't just waste analyst time. It drives pricing decisions that directly erode margins.

Questions to Ask Before Signing

Use these questions to pressure-test any provider during the sales process:

- What is your average Usable Record Rate across e-commerce projects?
- How quickly do you detect and fix scrapers when a target site changes structure?
- How do you handle Cloudflare-protected websites specifically?
- What happens to pricing and delivery SLAs during volume spikes?
- Can you provide compliance documentation and a Data Processing Agreement?
- What does the client relationship look like post-launch? Who is our dedicated contact?
- Can we run a pilot project before committing to a long-term contract?

The answers reveal far more than any feature sheet.

How to Match Provider Type to Your Situation

The right service model depends primarily on your internal technical capacity and the criticality of pricing data to your business.

If your team has dedicated data engineering resources and moderate scraping needs, a self-service platform may be a reasonable starting point. The tradeoff is ongoing maintenance burden that grows as anti-bot systems become more sophisticated.

If your organization makes material pricing decisions based on competitor data, and you don't have the engineering bandwidth to maintain a scraping infrastructure, a fully managed competitor price monitoring service eliminates the operational risks that matter most: scraper breakage during peak periods, silent data degradation, and the accumulated cost of internal maintenance.

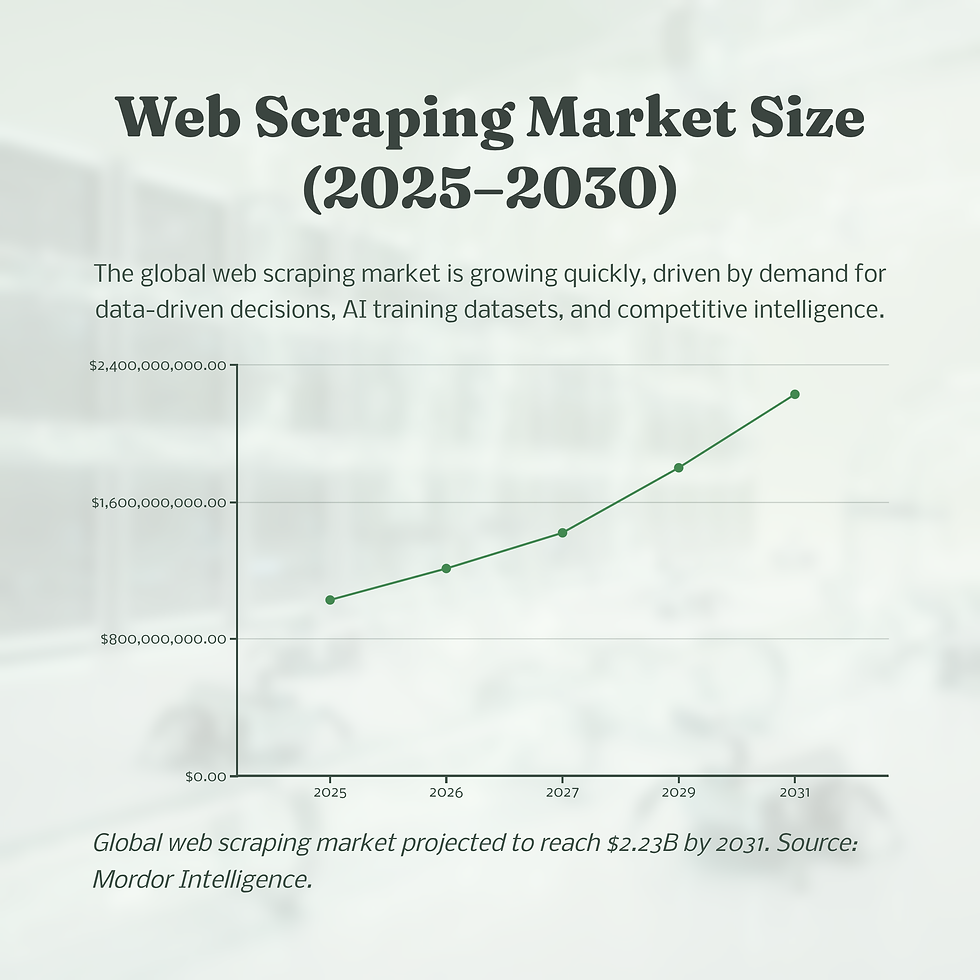

The market is growing fast. According to Mordor Intelligence, the web scraping market reached approximately $1.03 billion in 2025 and is projected to grow to $2.23 billion by 2031. More relevant to e-commerce operators: the technical barrier to successful scraping is rising in parallel. The providers with serious infrastructure will increasingly separate from those relying on commodity techniques.
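
Those two market figures imply a compound annual growth rate that can be checked with a few lines. This is a derived estimate from the numbers quoted above, assuming six full years of compounding between 2025 and 2031; it is not a figure taken from the Mordor Intelligence report itself.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of dollars, as quoted above.
growth = cagr(1.03, 2.23, 2031 - 2025)
print(f"Implied CAGR: {growth:.1%}")
```

The result comes out at a little under 14% per year.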

For e-commerce companies evaluating this decision, the real risk is not overspending on a scraping provider. It's underspending on one that delivers unreliable data into your pricing engine at the moment it matters most.

Ready to Talk About Your Specific Requirements?

Every pricing intelligence project is different. If you're evaluating web scraping providers for e-commerce and want to understand what a fully managed approach looks like for your catalog size, competitor set, and update frequency, contact our team at Ficstar. We'll walk through the scope, provide transparent pricing quickly, and let the work speak for itself.

We back that with a 100% satisfaction guarantee, a free trial with actual data collection (not just a demo), and client relationships that span 10+ years. We've worked with organizations across retail, automotive, financial services, hospitality, and more.
